I have taken a look at how the winners and losers of the Panda 4.1 update have developed since the initial rollout. Both Google’s statements and the data pointed to a slow, continuous rollout, which is why we waited until we could say that the rollout was most likely complete. And in many domains we have indeed observed not a one-time drop in SEO Visibility but, in many cases, a continuous, step-by-step loss.

But this did not happen to all domains. In some cases, Google has changed direction, and losers of the first iteration have returned to their pre-Panda SEO Visibility values.

Are updates now continuous?

Has Google now integrated Panda into the algorithm so that we can no longer cleanly separate updates and their iterations from their impact, because their effect is permanent and continuous? In other words: does Google tweak the algorithm until they are “happy” with the results? And since these are query-based updates: do they simply keep adding new queries?

There really is a lot of movement in the data. The Penguin 3.0 update, whose effects are not yet fully tangible (again, a slow rollout!), also plays a part in this, as it partially overlaps with this Panda iteration.

However, I am also often asked how supposedly ‘bad sites’ are still able to hold their positions on the SERPs and why Google is so often simply unable to filter them out. It was at this point that I noticed something in the many examples from the loser list of Panda 4.1.

Panda losers: Out of the top rankings – out of mind?

Much too often we see only what catches our eye and forget that Google is really very effective at structuring search results by the quality of their content. This becomes clear when you stop concentrating on the (ever shrinking number of) pages that rank among the top results over the long term in spite of their alleged lack of content, and instead look at how the losers have developed. Here, it is far too often a case of: out of sight, out of mind.

Besides, Google has stressed that the update is a slow rollout over an extended period. And this is precisely what the data shows.

These Panda 4.1 losers have continued to lose

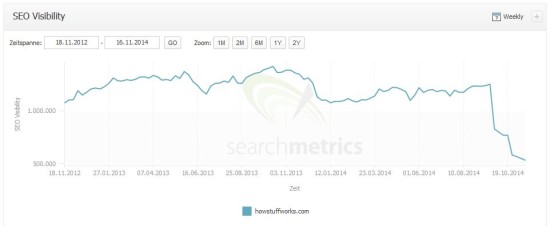

A retrospective look at the data around Panda 4.1 reveals a recurring phenomenon: many sites have continuously lost SEO Visibility with every new data point. So we are not dealing with a sudden drop followed by a plateau, but with a continuous downward trend lasting several weeks.
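
The distinction between a one-time drop followed by a plateau and a continuous loss can be sketched in a few lines of Python. This is only an illustration, not how the data behind the charts was processed: the function name `classify_decline`, the 2% tolerance for what counts as "flat", and the sample numbers are all assumptions made for the example.

```python
from typing import Sequence


def classify_decline(values: Sequence[float], flat_tolerance: float = 0.02) -> str:
    """Label a series of visibility scores (hypothetical helper).

    values: one score per data point, starting with the pre-update baseline.
    flat_tolerance: relative change treated as "no movement" (assumed value).
    """
    if len(values) < 3:
        raise ValueError("need a baseline plus at least two later data points")
    if min(values) <= 0:
        raise ValueError("visibility values must be positive")

    # Relative change from each data point to the next.
    changes = [(later - earlier) / earlier
               for earlier, later in zip(values, values[1:])]
    losing = [c < -flat_tolerance for c in changes]
    flat = [abs(c) <= flat_tolerance for c in changes]

    if all(losing):
        # Every new data point is lower than the one before.
        return "continuous decline"
    if losing[0] and all(flat[1:]):
        # One initial loss, then the series stays roughly level.
        return "drop then plateau"
    return "other"


# Made-up sample series, not real domain data.
print(classify_decline([100, 85, 72, 60, 51]))       # continuous decline
print(classify_decline([100, 60, 60.5, 59.8, 60]))   # drop then plateau
```

The tolerance matters: set it too low and normal weekly noise is read as a loss, set it too high and a slow decline looks like a plateau.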

Aggregators

howstuffworks.com

answers.com

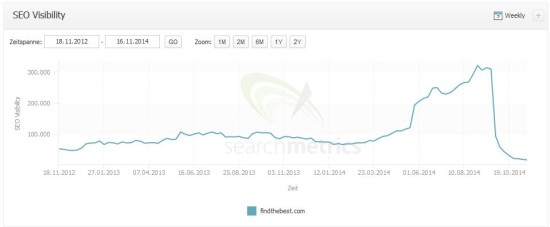

findthebest.com

yellow.com

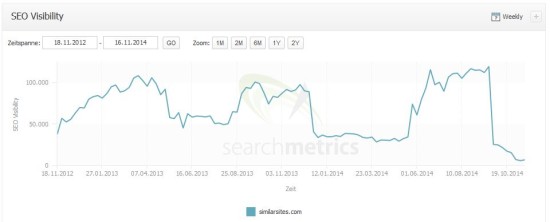

similarsites.com

… and there are many others I could list here.

As you may notice, some domains (e.g. findthebest.com or similarsites.com) initially gained in the course of Panda 4.0, but with the current iteration of Panda they perform even worse than before.

Some initial losers are even back already

As I said, the data around Panda 4.1 shows different patterns. There are some examples of domains that first lost SEO Visibility after Panda 4.1 but have already recovered (in the course of the very same update cycle).

Domains with delayed drop

Finally, there are even examples of domains where the drop in SEO Visibility was delayed. Some of these domains were actually winners of Panda 4.0. Now we see that this was most likely a short-lived success.
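
To make the patterns described above (recovery within the same update cycle, delayed drop, lasting loss) easier to tell apart, here is a rough Python sketch. The function name `classify_trajectory` and all thresholds (a 20% loss counting as a drop, 95% of the baseline counting as recovered, two data points as the cut-off for "delayed") are assumptions chosen for illustration, and the sample series are invented.

```python
from typing import Sequence


def classify_trajectory(
    values: Sequence[float],
    drop_threshold: float = 0.20,   # assumed: a loss of 20% counts as a drop
    recovery_ratio: float = 0.95,   # assumed: 95% of baseline counts as recovered
    delay_points: int = 2,          # assumed: drops after this many points are "delayed"
) -> str:
    """Label a visibility series whose first value is the pre-update baseline."""
    if len(values) < 2:
        raise ValueError("need a baseline plus at least one later data point")

    baseline = values[0]
    after = values[1:]
    drop_level = baseline * (1 - drop_threshold)

    # Positions (1-based, counted from the update) at which the drop level is reached.
    drop_positions = [i for i, v in enumerate(after, start=1) if v <= drop_level]
    if not drop_positions:
        return "no significant drop"
    if after[-1] >= baseline * recovery_ratio:
        # Dropped at some point, but the latest value is back near the baseline.
        return "recovered"
    if drop_positions[0] > delay_points:
        # The first real loss only shows up several data points after the update.
        return "delayed drop"
    return "lasting loss"


# Invented sample series, not real domain data.
print(classify_trajectory([100, 70, 65, 90, 98]))        # recovered
print(classify_trajectory([100, 101, 99, 100, 70, 60]))  # delayed drop
print(classify_trajectory([100, 75, 70, 65]))            # lasting loss
```

Because Penguin 3.0 overlaps with this Panda iteration, a label from a sketch like this says nothing about which update caused the movement; it only describes the shape of the curve.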

Conclusion: Slow updates often cause continuous losses, as well as delayed effects

Of course, there are always exceptions, escapees, or recoveries of sites whose relevance and quality are questionable at first (or even second) glance. Not every site from the loser list has followed this trajectory. That is by no means what I’m trying to say, and it is also why I have given some other examples.

But the selected examples make one thing very clear: when it comes to the relevance and quality of content, which is increasingly in the spotlight, Google takes targeted, persistent and consequential action if certain signals suggest that content is no longer of the quality users may have expected. And this is something that Google is able to measure (for example with CTR, Bounce Rate or Time on Site).

In any case, the ‘new (user) signals’, whose feedback was, according to Google, a particular help in recognizing lower-quality content, appear to have reached their goal. The long-term developments have not missed their target either.

Panda 4.1 seems to be complete for now

To come back to the original question: it seems that the current Panda update is now completely rolled out. At least the data is currently ‘settling down’, which is what we were waiting for.

Therefore, in conclusion, it should be said that:

- Panda 4.1 took around five to six weeks to roll out and meant a continuous loss for many domains,

- the rather slow rollout meant that some domains recovered quite quickly after an initial drop (it looks like Google has “rolled in” the Panda again in some cases), while for others the impact was delayed.

To sum up, the Panda 4.1 update is pretty multifaceted and not that easy to retrace. I am intrigued to see whether this is the kind of update we can expect in the future.