Crawling websites, analyzing them technically and optimizing them based on these findings is standard procedure when it comes to improving online performance. Today, I would like to introduce a new, data-driven solution for optimizing internal link structure: Searchmetrics Link Optimization.

On-page optimization is multi-layered: one layer is analyzing individual subpages in order to identify errors and optimization potential, i.e. monitoring and improving the core elements of your domain. Site Structure Optimization serves exactly this purpose, and Visibility Guard automatically monitors your domain for corresponding errors.

Why are internal links important?

At least as important as this are the connections between these core elements, which make your site a stable construct and offer an optimal user journey. An optimal internal link structure gives users – and Google – a roadmap, leading them logically around your site to their desired destination.

Both users and Googlebot navigate your website via internal links.

If a website is internally linked like a labyrinth – or completely overwhelmed with links – users and Googlebot will get lost. It is hard to recognize what is important, as there is no clear structure, and visitors will bounce.

But this does not have to be the case. Optimizing internal link structure helps improve the user journey. A clean link structure also helps Google to recognize related context and content.

Websites and link graphs

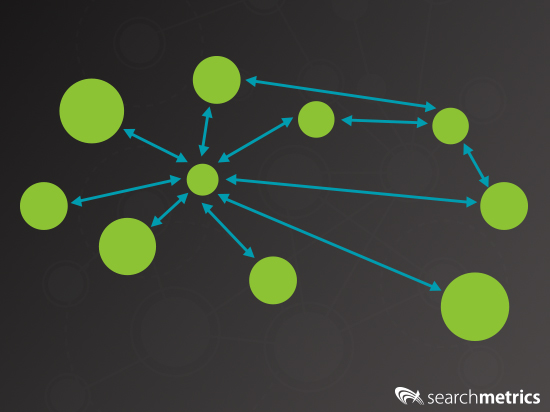

In order to help you understand how powerful our Link Optimization is, I want to briefly introduce you to the world of link graphs. Imagine a website with several hundred subpages. Every one of these subpages represents a node on our graph.

A graph of this size is overwhelming to look at, and it is impossible to get an overview. Let’s reduce this to 10 subpages.

If a link exists between two subpages, we can represent this in our graph with an arrow between the corresponding nodes.

If we do this for all links between all subpages, then we have a complete link graph.
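To make this concrete, here is a minimal sketch of such a link graph in Python. The ten URLs and the links between them are invented for illustration; in practice, the list of links would come from a crawl.

```python
# Hypothetical internal links of a 10-page site: (source page, target page).
links = [
    ("/", "/products"), ("/", "/blog"), ("/", "/about"),
    ("/products", "/products/a"), ("/products", "/products/b"),
    ("/products", "/products/c"),
    ("/blog", "/blog/post-1"), ("/blog", "/blog/post-2"),
    ("/blog/post-1", "/products/a"), ("/blog/post-2", "/blog/post-1"),
    ("/about", "/contact"), ("/products/a", "/"),
]


def build_link_graph(links):
    """Return {page: set of pages it links to}, with one node per page."""
    graph = {}
    for source, target in links:
        graph.setdefault(source, set()).add(target)
        # Pages without outgoing links still need to exist as nodes.
        graph.setdefault(target, set())
    return graph


graph = build_link_graph(links)

# Count the arrows pointing at each node (incoming internal links).
inlinks = {page: 0 for page in graph}
for targets in graph.values():
    for target in targets:
        inlinks[target] += 1

for page in sorted(graph):
    print(f"{page}: {len(graph[page])} outgoing, {inlinks[page]} incoming")
```

Each key is a node, and each entry in its set is an arrow to another node. Everything that follows (ranking pages, finding hubs, spotting weakly linked pages) is a computation on a structure like this one.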

Why are graph representations relevant?

Good question. Many algorithms are based on graph representations. For example:

- The Hilltop algorithm from Compaq Systems Research (now owned by Google) identifies authoritative pages on a specific topic based on a group of expert pages.

- The HITS algorithm from Jon Kleinberg (supported by Google and Yahoo, among others) finds hubs and authorities.

- PageRank from Google identifies the most popular websites.

- TrustRank from Yahoo identifies spam and non-trustworthy websites.

- CheiRank finds hubs, i.e. nodes that are well connected via their outgoing links.

For years such algorithms have formed the basis of many search engines. SPS from Searchmetrics is based on observing websites and links.
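As an illustration of how such graph algorithms work, the following sketch computes two of the scores listed above on a tiny, invented graph: PageRank by simple power iteration, and CheiRank as PageRank on the same graph with every link reversed. The damping factor of 0.85 and the 50 iterations are conventional choices I am assuming here. This is a textbook-style sketch and says nothing about how search engines or Searchmetrics implement their metrics.

```python
def pagerank(graph, damping=0.85, iterations=50):
    """Power iteration over {page: set of link targets}.

    Every link target must also appear as a key in `graph`.
    """
    pages = list(graph)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Rank held by pages without outgoing links is spread over all pages.
        dangling = sum(rank[page] for page in pages if not graph[page])
        new_rank = {
            page: (1 - damping) / n + damping * dangling / n for page in pages
        }
        for page in pages:
            for target in graph[page]:
                new_rank[target] += damping * rank[page] / len(graph[page])
        rank = new_rank
    return rank


def reverse(graph):
    """Return the same graph with the direction of every link inverted."""
    reversed_graph = {page: set() for page in graph}
    for source, targets in graph.items():
        for target in targets:
            reversed_graph[target].add(source)
    return reversed_graph


def cheirank(graph, **kwargs):
    """CheiRank: PageRank computed on the graph with inverted links."""
    return pagerank(reverse(graph), **kwargs)


# Hypothetical example: one page links out a lot, one page collects links.
graph = {
    "/hub": {"/a", "/b", "/c"},
    "/a": {"/target"},
    "/b": {"/target"},
    "/c": {"/target"},
    "/target": set(),
}

pr = pagerank(graph)
cr = cheirank(graph)
top_pagerank = max(pr, key=pr.get)
top_cheirank = max(cr, key=cr.get)
print("Highest PageRank:", top_pagerank)
print("Highest CheiRank:", top_cheirank)
```

In this example, the page that collects the links scores highest on PageRank, while the page that hands them out scores highest on CheiRank. That difference is exactly the distinction between popular pages and hubs.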

The status quo for link structures

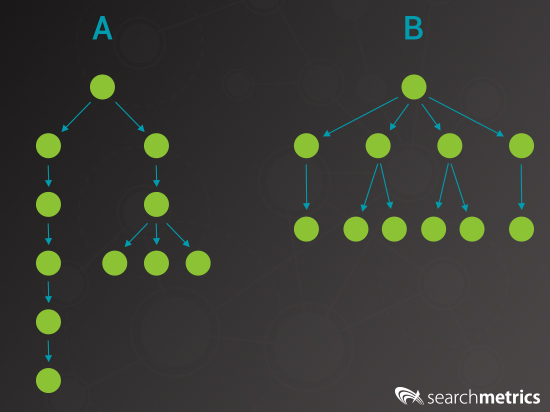

There are many solutions on the market that analyze internal link structures and identify well or badly linked subpages based on one of the techniques mentioned above (or an adaptation of one). Some of these tools are also capable of improving the distribution of link juice (link flow – imagine a pyramid of champagne glasses being filled from the top).

But what do we mean by improving the distribution? If I look at the link graph above, then this just means distributing it more evenly. Of course, subpages of website B (see above) will on average have a better link juice distribution than those of website A. And overall, website B will rank better than website A. But is this really the optimal solution?

What does Link Optimization do differently?

Unlike other providers, we do not only take internal link structure into account. We enrich this data with data from your projects in the Searchmetrics Suite or with unique data from Content Performance or our Research Cloud. With the help of information such as search volume, click price, current rankings and search intention, you can easily identify the problems and optimization potential that are truly valuable.

A website with two million subpages will always have optimization potential. But here – as so often – the Pareto principle holds true: fixing 20% of the problems delivers 80% of the possible gain in visibility (and revenue). To use your time efficiently and optimize in a targeted, effective way, you need to know which problems are most relevant. Link Optimization makes exactly this possible.

What can I do with Link Optimization?

Let’s talk about the two most common use cases:

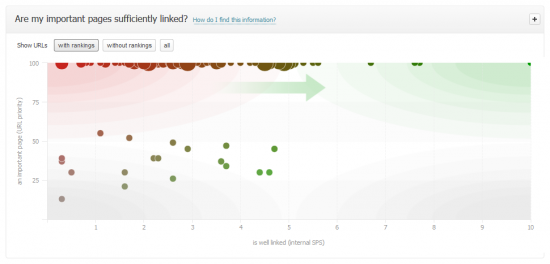

Use case 1: identify badly linked pages with high traffic potential

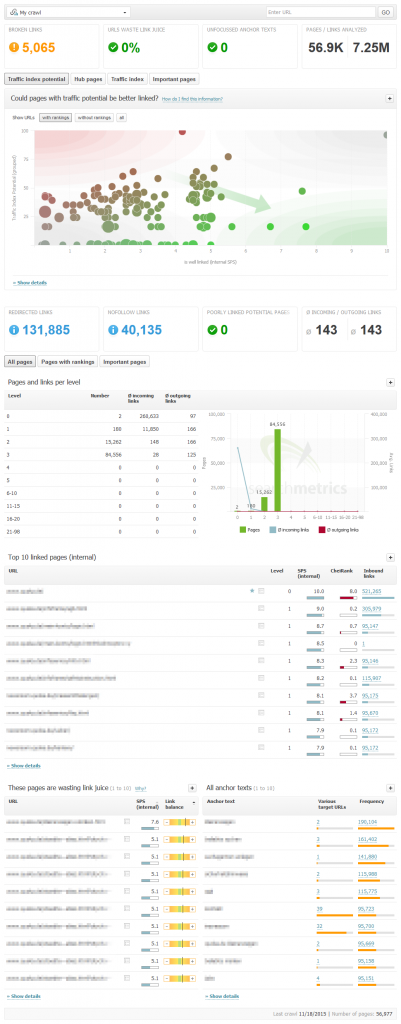

Do you want to know which pages are most important and should be linked better to improve their performance? Let’s look at an example from Link Optimization. Here is a representation of badly linked pages, sorted by traffic index potential:

Advantage: You don’t have to manually calculate the revenue and rankings data of all of these pages. We have already created and compiled this list for you. We sort pages directly by potential. This way you can focus your optimization strategy on the most important pages.

Why is traffic index potential so important?

Using anything other than potential would risk adding links to subpages where:

- Potential is already exhausted, i.e. pages that already rank well for their topic

- There is no potential, as their problems are content-based rather than internal link problems

- The page only ranks for valueless keywords.

In all three cases, it would be a waste of time to add more internal links to these pages.
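The prioritization described in this use case can be sketched in a few lines. The field names, thresholds and numbers below are assumptions for illustration only; in particular, `traffic_potential` is just a stand-in value and not the way Searchmetrics calculates traffic index potential.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    inlinks: int              # internal links pointing to this page
    traffic_potential: float  # hypothetical stand-in for traffic index potential


def link_candidates(pages, max_inlinks=3, min_potential=1.0):
    """Badly linked pages with remaining potential, highest potential first."""
    weak = [
        page for page in pages
        if page.inlinks <= max_inlinks
        and page.traffic_potential >= min_potential
    ]
    return sorted(weak, key=lambda page: page.traffic_potential, reverse=True)


# Invented sample data.
pages = [
    Page("/pricing", inlinks=2, traffic_potential=120.0),
    Page("/blog/old-post", inlinks=1, traffic_potential=0.5),  # no potential
    Page("/", inlinks=250, traffic_potential=300.0),           # well linked
    Page("/guide", inlinks=3, traffic_potential=480.0),
]

candidates = link_candidates(pages)
for page in candidates:
    print(page.url, page.inlinks, page.traffic_potential)
```

Pages with little or no potential drop out of the list, however badly linked they are, so the remaining candidates are the ones where additional internal links are most likely to pay off.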

Use case 2: identify hub pages and correctly distribute their link juice

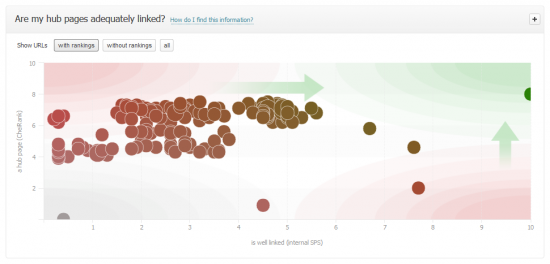

Do you want to know which pages are suited as hubs or “link-givers”? Link Optimization gives you a list of URLs that should link to other pages more heavily – and all this represented as a chart:

Advantage: This chart shows you at a glance how balanced the current internal link structure is and where the greatest optimization potential lies. Particularly for agencies, this is a massive time-saver. In-house SEOs can also rapidly check whether they should optimize internal link structure or whether other areas are more important.
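One simple way to think about "link-givers" is as pages that receive many internal links but pass on very few. The sketch below uses that heuristic; it is my own simplification with invented thresholds and data, not the logic Link Optimization uses.

```python
from collections import Counter


def hub_candidates(links, min_inlinks=3, max_outlinks=1):
    """Pages that receive many internal links but link out to few pages."""
    inlinks = Counter(target for _, target in links)
    outlinks = Counter(source for source, _ in links)
    candidates = [
        page for page, count in inlinks.items()
        if count >= min_inlinks and outlinks[page] <= max_outlinks
    ]
    return sorted(candidates, key=lambda page: inlinks[page], reverse=True)


# Invented sample data: (source page, target page).
links = [
    ("/", "/guide"), ("/", "/shop"), ("/", "/blog"),
    ("/shop", "/guide"), ("/shop", "/"),
    ("/blog", "/guide"), ("/blog", "/"),
    ("/blog/post", "/guide"),
    ("/guide", "/"),
]

hubs = hub_candidates(links)
print(hubs)
```

Here `/guide` collects four internal links but only links back to the homepage, so it is the one page that could pass its link juice on to more subpages.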

Bonus: Link Optimization also allows you to find and remove links that link to redirects or 404 error pages.
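Finding such links is a straightforward lookup once crawl data is available. The sketch below assumes two hypothetical inputs, a list of internal links and a mapping from URL to HTTP status code, and lists every link whose target is a redirect (3xx) or a 404 page.

```python
def links_to_fix(links, status_codes):
    """Return (source, target, status) for links to redirects or 404 pages."""
    problems = []
    for source, target in links:
        status = status_codes.get(target)
        if status is None:
            continue  # target was not crawled, so nothing can be said
        if status == 404 or 300 <= status < 400:
            problems.append((source, target, status))
    return problems


# Invented sample data.
links = [
    ("/", "/shop"),
    ("/", "/old-shop"),
    ("/blog", "/deleted-post"),
    ("/blog", "/shop"),
]
status_codes = {"/shop": 200, "/old-shop": 301, "/deleted-post": 404}

problems = links_to_fix(links, status_codes)
for source, target, status in problems:
    print(f"{source} -> {target} ({status})")
```

Links to redirects can usually be pointed straight at the final URL, while links to 404 pages should be removed or pointed at a suitable replacement.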

Summary: Optimize link structure with data-driven analyses

Particularly for large websites, it is almost impossible to create an overview of the fundamentally important internal link structure. The amount of data that needs to be monitored is overwhelming; this analysis takes up valuable time and is so complex that current tools are simply incapable of handling it.

Searchmetrics Link Optimization enriches data from link crawling with relevant information and offers intuitive visual representations of where the greatest optimization potential lies. You save time and get active recommendations, meaning you can achieve an optimal link structure for your subpages.

Credit system for Link Optimization

Link Optimization crawls are based on the credit system used in Site Structure Optimization (SSO). If you want to run a landing page through the SSO crawler, this costs one credit. If you use SSO and the new Link Optimization, this costs two credits per landing page. So that you can test Link Optimization, such crawls will cost only one credit per URL until the end of February 2016.

Please note: Link Optimization is exclusively available to holders of Suite Enterprise or Ultimate licenses 2014/15.

Find out more about Link Optimization

As always we would love to hear your comments and opinions below.