Google Updates don’t come around on a fixed date every year like Christmas or St. Patrick’s Day. You don’t even know approximately when to expect them. What we do know is that Google is never finished: it won’t stop tweaking its algorithm, and we won’t stop trying to analyze and interpret the impact of these updates. Last week, on 12th March, Google announced an update to its core algorithm. We’ve taken a look at domains that saw changes in their SEO Visibility and found some interesting patterns suggesting that websites must do even more to up their game with unique and interesting content.

Not all updates are born equal

When we use the term “Google Update,” we generally mean larger, far-reaching changes to the search algorithm. These can target particular, easily recognizable aspects of websites (e.g. HTTPS), or they can be less clear-cut and reward characteristics like “quality.”

Google actually makes updates to its ranking algorithms on a daily basis. It runs automated experiments, some of which stay active for only a few hours. Many webmasters may not even notice these. Or they do notice and panic briefly, before everything goes back to normal a day later.

Source: @dannysullivan, Twitter.

Let there be an update

A few times a year, Google’s engineers carry out larger updates that can have a far-reaching impact for many webmasters and SEOs. Without any warning, traffic to their websites can go up or down.

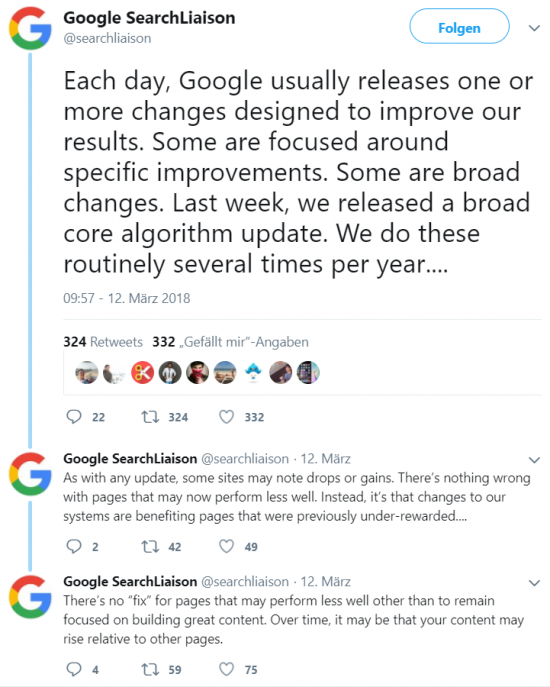

In some cases, these updates are even confirmed by Google, as we saw on the 12th of March. Via its official Twitter account @searchliaison, which Google created in November 2017, the search giant confirmed what it described as a “broad core algorithm update.” In what may be little consolation to those who lost Visibility, Google said the update did not explicitly target unwanted SEO techniques.

Google’s take is that websites that have been hit by this update are not being punished in any way, and that there is not (necessarily) anything wrong with these pages. So perhaps, on the SEO front, there is nothing that needs “fixing.”

Rather, Google has improved the quality and/or relevance of the search results for queries, so that pages that better meet the search intent for a keyword have improved their rankings and gained SEO Visibility. Google’s further explanation struck a familiar chord: to make up for any ranking losses, websites should build great content.

Source: @searchliaison, Twitter.

Searching for sense

As Google’s statements suggest, there are few obvious commonalities among all the losers or all the winners, and it’s not easy to spot a pattern. This isn’t an update that directly deals with a particular page feature like load time or HTTPS. Rather, if you believe Google, the algorithm has been adjusted to better recognize awesome content for different searches.

We’ve taken a closer look into the data and found that, at least for some of the most heavily impacted sites, there are clear parallels. These become particularly apparent if we look back a few months.

Rewind to November 2017

In mid-November 2017, there were rumors and chatter in SEO forums and on Twitter about a (smaller) Google Update that Google never subsequently confirmed. The community has taken to calling such unconfirmed changes with a large impact “Phantom” updates.

Confirmed or not, the consensus seemed to be that something did happen in November 2017.

Back to the present

If we focus our microscope on the winners of the latest Core Algorithm Update, we see that there was already an increase in their SEO Visibility in the week to 19th November. Not every winner shows a significant increase, but in hindsight and considering the wider context, there is an undeniable pattern.

The question is: Did Google do a low-level test at the end of last year of some of the changes that they have now rolled out on a larger scale? An analysis of winners’ Visibility data points to a resounding yes.

The pattern of the same domains being affected by several updates is also familiar from other update series. For example, many of the domains affected by the Phantom V Update in February 2017 had previously seen their SEO Visibility impacted by earlier iterations of the Phantom.

Not everyone’s in Google’s good books

If some domains have gained SEO Visibility, then it is only logical that some will have lost out: there is only one No. 1 ranking on each SERP. Among the losing domains, however, we see fewer parallels to the SEO Visibility fluctuations of mid-November.

This comparative lack of parallels among the losers is not unexpected. Google indicated that this wasn’t a penalty update and that the losers weren’t doing anything wrong, so the domains didn’t need repairing per se. Google is concentrating on rewarding strong content or relevance, which, even without any intent to penalize, will unavoidably see some domains end up on the loser list.

One serving of great content, please

Of course, being told they haven’t done anything wrong will likely not do all that much to cheer websites that have lost SEO Visibility. However, Google’s ultimate goal isn’t rewarding or punishing any particular website – it’s providing users with search results that best meet their search intents and that answer their questions as well as possible.

A good ranking is only really useful if the page behind it also provides the content people are looking for. Otherwise, there’ll be few clicks and even fewer conversions. If an algorithm update causes such a page to lose rankings, it is quite possible that, even though its overall SEO Visibility drops, its long-term traffic will remain stable or lose only “unwanted” traffic, i.e. traffic from users who weren’t happy with the result as an answer to their search query. With each update, Google is trying to build search results pages that place the results best serving the user’s needs at the top.