In this article I show how Google’s Panda update is a more stable part of the continuously modified algorithm than many may think. Here I examine victims of different Panda iterations, explain the concept behind them and go into detail about a few cases.

Although it’s not clear whether the update has been completely rolled out yet, the recent and long-awaited release of Penguin 3 created far less noise than originally expected. Many marketers forget that updates roll out over time rather than within just a couple of days. This is especially the case with Panda, the most visible quality algorithm in Google’s playhouse.

It’s important to distinguish between a Panda update and a Panda iteration. An update, like Panda 1 or Panda 3, means that the factors influencing the Panda algorithm have been renewed or changed. An iteration means the algorithm has been applied to the Google index and is being released or deployed, like Panda 4. We have known since 2011 that Google iterates its Panda algorithm monthly: the algorithm is refreshed on a specific day each month and then slowly rolled out. Some iterations have a greater impact, while others are hardly noticeable. Panda updates, on the other hand, happen more irregularly, probably depending on how well the algorithm can keep up with the development of the Internet.

The simplest way to think of it is this: an update is like a new version of a car, whereas an iteration is the yearly production run.

Overview of Panda updates:

The first Panda update took place on February 24th, 2011. Since then, three more updates have occurred, each impacting Google’s overall algorithm:

Panda II – April 11th, 2011

Panda III – October 19th, 2011

Panda IV – May 19th, 2014

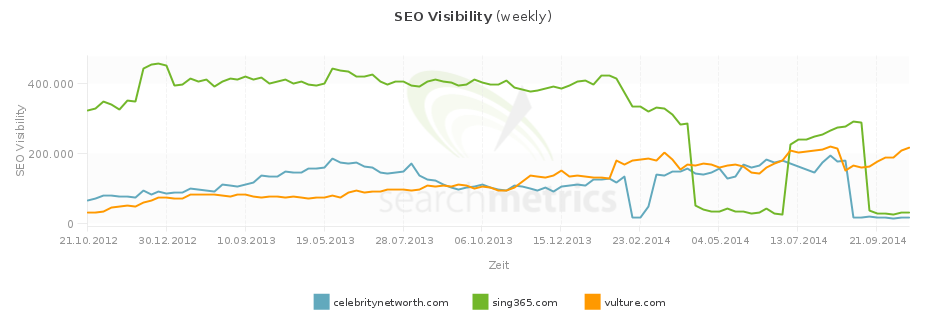

The SEO Visibility in the screenshot shows three sites that were recently hit by a Panda iteration. All three lost SEO Visibility within 1-2 weeks: vulture.com on August 24th, celebritynetworth.com on August 31st and sing365.com on September 14th.

Besides vulture.com, other sites affected by the Panda 4 iteration on August 24th that saw changes in their SEO Visibility include:

- Dealcatcher.com: +118% (Panda 4: -50%)

- Globalpost.com: +113% (Panda 4: -75%)

- Serviceguidance.com: -80% (Panda 4: -75%). This domain now redirects to guidancesite.com, which has gained a lot of SEO Visibility. It appears the relaunch / restructure has helped the site get out of the algorithmic penalty.

- Delish.com: +23% (Panda 4: -50%)

- Cheapflights.com: +125% (Panda 4: -33%)

- Newseum.org: -38% (August 31st: -57%)

- Phonearena.com: -22%

- Elitedaily.com: -41%

- Giphy.com: -30%

- Thetoptens.com: -73%

- Findagrave.com: -45%

- Howtogeek.com: -25% (Panda 4: -36%)

- Extendedstayamerica.com: -73% (Panda 4: -36%, July 20th: +72%)

- Roomstogo.com: -35%

- Mp3.com: -21% (Panda 4: +63%)

- Skyscanner.com: -23%

- Petplace.com: -62% (Panda 4: +94%)

- Onlineworldofwrestling.com: -57% (Panda 4: +84%)

- Steampowered.com: -50% (Panda 4: +68%)

- Librivox.com: -47%

- Franchisedirect.com: +27% (Panda 4: +75%)

- Buzzsugar.com: +82% (Panda 4: -41%, July 6th: -85%)

- Dealigg.com: +115% (September 14th: -75%)

Newseum.org was also affected two weeks in a row, but it isn’t the only one. More domains were affected one week later, around the same time as celebritynetworth.com:

- Holidays.net: -91% (Panda 4: +74%)

- Hourscenter.com: -88%

- Drugabuse.com: -81%

- Medicalnewstoday.com: -64%

- Nationalreport.net: +4,698% (July 6th: -94%)

I’ve also found a bigger chunk of domains that were affected on September 28th:

- Aceshowbiz.com: +62% (Panda 4: -75%)

- Yourtango.com: +73% (Panda 4: -75%)

- Dealcatcher.com: +25% (Panda 4: -50%)

- Livescience.com: +52% (Panda 4: -50%)

- Webopedia.com: +29% (Panda 4: -50%)

- Simplyrecipes.com: +43% (Panda 4: -33%)

- Digitaltrends.com: +35% (Panda 4: -50%)

- Health.com: +44% (Panda 4: -50%)

- Spoonful.com: +69% (Panda 4: -75%) This domain is now redirecting to family.disney.com

- Songkick.com: +21% (Panda 4: -75%)

- Realsimple.com: +29% (Panda 4: -33%)

- Appbrain.com: -59% (Panda 4: -33%)

- Techtarget.com: +23% (Panda 4: -33%)

- Rd.com: +77% (Panda 4: -75%)

- Chow.com: +31% (Panda 4: -33%)

- Doxo.com: -36% (Panda 4: -50%)

- Heavy.com: +35% (Panda 4: -50%)

- Csmonitor.com: +24% (Panda 4: -33%)

- Parenting.com: +30% (Panda 4: -50%)

- Whatscookingamerica.net: +27% (Panda 4: -50%)

- Columbia.edu: +10% (Panda 4: -20%). This is a really interesting one. It shows that .edu domains no longer have a free pass to do what they want. It almost seems as if Google has lost trust in them, not from a spam perspective, but from an “adding value for users” perspective.

- Internetslang.com: +24% (Panda 4: -33%)

- Ibiblio.org: +48% (Panda 4: -50%)

- Mybanktracker.com: +28% (Panda 4: -50%)

- Totalbeauty.com: +40% (Panda 4: -50%)

- Glamour.com: +32% (Panda 4: -50%, July 20th: -12%, August 17th: -25%)

- Foursquare.com: -28% (Panda 4: 11%)

- Hotelguides.com: +75% (Panda 4: -67%)

- Wisdomquotes.com: -98% (Panda 4: +356%)

- Franchisehelp.com: +58% (Panda 4: -50%)

Notice the high number of sites that were already affected by Panda 4 and have now gained (nationalreport.net, rd.com) or lost visibility (wisdomquotes.com, holidays.net). These are perfect examples of why the following statement holds: sites that have been affected by a former algorithmic penalty are more likely to be affected again by future updates. Think of a blacklist. Even though we’re dealing with machines and numbers here, imagine you have lost Google’s trust in your site and now have to rebuild it. This applies not only to Panda, but to all other manual and algorithmic penalties as well.

Most changes in the lists are not too severe, meaning we’re dealing with losses of around 50% rather than 75-90% of SEO Visibility. However, keep in mind that Google does hide manual penalties behind algorithmic penalties, so it is possible that some sites were not actually hit by Panda, but penalized manually.
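When reading the percentages in these lists, it helps to remember that losses and gains are not symmetric: a gain is measured against the already reduced level. The following is a minimal sketch of that arithmetic, not a description of how SEO Visibility itself is calculated. The function names are my own, and compounding two figures assumes nothing else changed between the two dates, which is rarely true in practice.

```python
def gain_needed_to_recover(loss_pct: float) -> float:
    """Percentage gain needed to get back to the level before a loss of loss_pct percent."""
    if not 0 <= loss_pct < 100:
        raise ValueError("loss_pct must be in the range [0, 100)")
    remaining = 1 - loss_pct / 100  # share of visibility left after the loss
    return (1 / remaining - 1) * 100


def net_change(*changes_pct: float) -> float:
    """Compound successive percentage changes into a single net percentage change."""
    level = 1.0
    for change in changes_pct:
        level *= 1 + change / 100
    return (level - 1) * 100


if __name__ == "__main__":
    for loss in (33, 50, 75):
        print(f"-{loss}% needs +{gain_needed_to_recover(loss):.0f}% to recover")
    # A 50% loss followed by a 50% gain still leaves a site below its old level.
    print(f"net change of -50% then +50%: {net_change(-50, 50):.0f}%")
```

In other words, a site that lost 50% has to double its visibility to be back where it started, and a site that lost 75% has to quadruple it.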

Let’s take a closer look at a couple of Panda victims:

- Spoonful.com is a great example of a site that lost SEO Visibility due to a Panda update and experienced a short increase before it was hit a second time. It seems as if Google gives these sites another small chance, but then realizes nothing has changed.

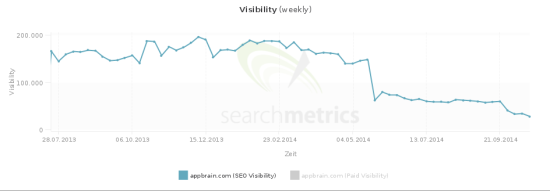

- This is not always the case. Appbrain.com was hit by Panda 4 on May 19th (59%) and again on September 28th, 2014 (33%). In this case rankings declined steadily: the second drop is not as sharp, but the decline towards zero stretches over a longer period of time.

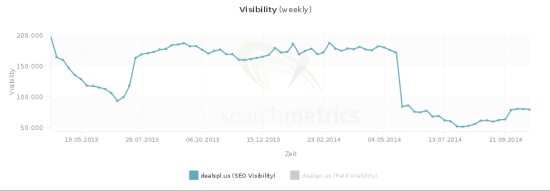

- An example of a soft release is Dealspl.us: on September 28th the site regained some of its Visibility but did not recover 100%. Apparently its efforts were not enough for a full recovery, but they left the site in better shape than before the Panda 4 update.

- Reader’s Digest (www.rd.com) is a nice example of a site that has almost fully recovered. It’s difficult to say whether the site has relaunched or just changed its structure and consolidated content, but the efforts seem to have paid off.

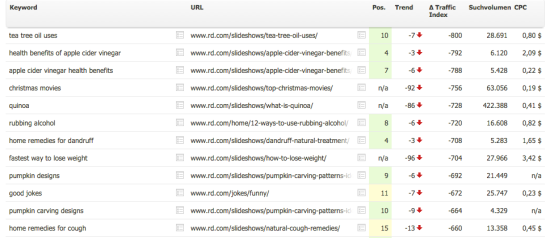

- What we do know for sure about rd.com is that it lost a large number of rankings within the /slideshows/ directory (see screenshot).

Slideshows are a series of pictures, usually one per URL with only a few sentences on the page. Because of this thin content, they are a Panda trap that catches a lot of publishers. Not to blame anyone, but slideshows are (or were) often used to increase the number of page views in order to make more money with ads.

Having manually tested the URLs that lost rankings, I found that Reader’s Digest now redirects them to consolidated articles: the pictures and content have been merged onto one page / URL, which solves the thin content issue.
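If you want to consolidate slideshows in a similar way, the first step is usually a redirect map from every single-slide URL to the consolidated article. Here is a rough sketch of how such a map could be built. The URL pattern `/slideshows/<slug>/<slide-number>/` and the `/articles/` target prefix are purely hypothetical; they are not taken from rd.com, so adjust them to your own site structure.

```python
import re
from urllib.parse import urlsplit

# Hypothetical URL pattern for one slide: /slideshows/<slug>/<slide-number>/
SLIDE_PATH = re.compile(r"^/slideshows/(?P<slug>[^/]+)/(?P<number>\d+)/?$")


def build_redirect_map(slide_urls, article_prefix="/articles/"):
    """Map each single-slide path to the path of its consolidated article.

    URLs that do not match the assumed slideshow pattern are skipped.
    """
    redirects = {}
    for url in slide_urls:
        path = urlsplit(url).path
        match = SLIDE_PATH.match(path)
        if match is None:
            continue  # not a slide URL, leave it alone
        redirects[path] = f"{article_prefix}{match.group('slug')}/"
    return redirects


if __name__ == "__main__":
    urls = [
        "https://example.com/slideshows/summer-tips/1/",
        "https://example.com/slideshows/summer-tips/2/",
        "https://example.com/about/",
    ]
    for source, target in build_redirect_map(urls).items():
        print(f"301 {source} -> {target}")
```

The resulting pairs can then be turned into permanent (301) redirect rules in whatever web server or CMS you use.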

Google aims to run as many iterations as possible, because Panda is supposed to improve the quality of the SERPs. Think of the Google Dance (yep, there was a time when results weren’t updated continuously but in waves): in the same way, the ultimate goal for Google is to integrate Panda into a live algorithm.

Penguin is not being iterated as much, since the update is meant to fight spam. Without diving too deep into the issue, there are three assumptions I want to throw into the ring:

- Since it’s not needed as much, it doesn’t seem to make sense to have it run continuously

- The algorithm is too heavy. Therefore it cannot be rolled out that often

- Its implications demand analyses, which take a lot of time. Since this is destructive for businesses, Google tries to be careful

Key takeaways:

- Once you’ve landed on Google’s blacklist, your site is more likely to be judged with less tolerance

- Updates / Iterations are being rolled out over longer periods of time

- There are different types of recovery: immediate restoration of rankings, slow recovery and partial recovery

If your website lost visibility on one of the mentioned iteration dates, make sure to review it according to the factors mentioned in the article “5 steps to definitely get a panda penalty”.
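To check whether your own drops line up with these dates, you can compare a weekly visibility series against them. The sketch below assumes you have exported weekly values as (date, value) pairs from whatever tool you use. The 20% threshold and the three-day tolerance are arbitrary choices of mine, and I assume the year 2014 for the dates that appear above without a year.

```python
from datetime import date

# Iteration dates mentioned in this article (year 2014 assumed where not stated).
ITERATION_DATES = [
    date(2014, 8, 24),
    date(2014, 8, 31),
    date(2014, 9, 14),
    date(2014, 9, 28),
]


def drops_on_iteration_dates(weekly_visibility, threshold_pct=20.0, tolerance_days=3):
    """Return (week, matched iteration date, change in %) for each sharp weekly drop.

    weekly_visibility: list of (date, value) tuples sorted by date.
    A drop counts when the week-over-week loss is at least threshold_pct
    and the week lies within tolerance_days of a listed iteration date.
    """
    hits = []
    pairs = zip(weekly_visibility, weekly_visibility[1:])
    for (_, previous), (week, current) in pairs:
        if previous <= 0:
            continue  # cannot compute a relative change from zero
        change_pct = (current - previous) / previous * 100
        if change_pct > -threshold_pct:
            continue  # not a sharp enough drop
        for iteration in ITERATION_DATES:
            if abs((week - iteration).days) <= tolerance_days:
                hits.append((week, iteration, round(change_pct, 1)))
                break
    return hits


if __name__ == "__main__":
    series = [
        (date(2014, 8, 17), 100.0),
        (date(2014, 8, 24), 60.0),
        (date(2014, 8, 31), 58.0),
    ]
    for week, iteration, change in drops_on_iteration_dates(series):
        print(f"{week}: {change}% (matches iteration on {iteration})")
```

A match is only a hint, not proof: a drop on one of these dates can just as well come from a relaunch, a technical problem or a manual action.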

Have you noticed similar developments?