Ever since the introduction of the Panda Update, affected publishers have been scrambling to recover rankings and search traffic.

One of the most outspoken publishers is the content farm hubpages.com, which lost 80% of its visibility in Google US search results in the initial rollout of Panda. Their CEO dealt with the situation aggressively, publicly describing his efforts at improvement and trying to get in touch with Google employees.

According to the WSJ, this was met with success in mid-July: the paper claims that “In June, a top Google search engineer, Matt Cutts, wrote to Edmondson that he might want to try subdomains, among other things.”

To most SEOs, this comes as a surprise. For years, adding folders to an established domain tended to perform much better for most people than adding subdomains – an opinion that was perpetuated by Matt Cutts himself, as Aaron Wall explains in great detail.

Matt Cutts’s statement prompted Hubpages to conduct a limited test at first, in which only a small amount of content was moved to subdomains (sorted by author or content quality). Having declared that test finished, they are now rolling out subdomains for all authors. Using the Searchmetrics Suite (the new filtering options in particular), these tests can be examined.

How does content on new subdomains fare?

Generally, the test of moving some of their content has turned out quite nicely for hubpages. Interestingly, the effects seem to be dependent on content quality.

- Subdomains for high quality authors led to improved rankings

For some of Hubpages’ best-rated authors, the move to a subdomain has yielded a massive improvement in rankings. An example: the visibility for “jennifer aniston hairstyles” has improved after moving the article to robin.hubpages.com, even surpassing the ranking before Panda.

Rankings also improved for keywords such as “how to make a yogurt” and “itune”, and this specific author even confirms a rise in traffic in his Google Analytics data.

- Curated content (not aggregated for a single author) does not seem to perform quite as well, but still significantly better than it did post-Panda.

the-best.hubpages.com was used to aggregate what seems like above-average content, and content that was moved there improved its rankings.

Something to keep in mind here is that most of this content had seen drops in the Panda update, but could still sustain Top 20 rankings (as opposed to becoming invisible). Google apparently rated this content “above average” even before the switch to subdomains. There is only one example in which a ranking could be recovered that was gone completely before the test.

- Mostly thin content was published on writers.hubpages.com (which was also a scenario they planned to include in their testing).

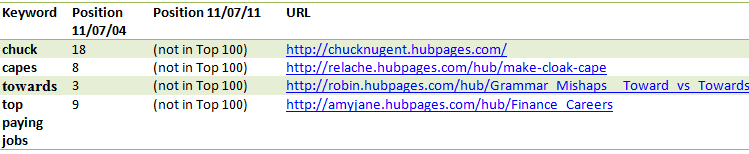

Content in this area mostly didn’t improve its rankings after moving. The best keyword in our database sustained its ranking. Other keywords covered in this area of the site tend to be equally sub-par.

Standing the test of time?

Some of the previous keyword graphs show a decline after the first week(s) on the new subdomain. This pattern seems to repeat for a number of keywords in a much more severe way.

As of now, it is still too early to tell if this is Panda rearing its head again, or just the usual changes following a content relocation / link signal redirection.

Conclusion

- The data is still inconclusive about whether subdomains can reverse the effects of Panda.

The next iteration of Panda in the US will be very interesting for every search marketer, since this will show how the Panda algorithm rates old content on a new subdomain.

- Putting above- and below-par content in different parts of the website seems to have a significant positive effect.

This might be one of the important lessons from these developments: if Google can easily distinguish good and bad content areas using the URL structure, good content might be exempt from penalties. However, you probably do not have to utilize subdomains to structure your content. I think that other, more popular options might also be viable, especially in markets that have not yet been hit by the Panda update. A clear-cut folder structure, e.g. separating automated and manual content, is very likely to be sufficient (which Hubpages does not employ; they store all content in the /hub/ directory).

- Adding a considerable number of subdomains seems to be much less problematic than what SEOs have previously come to believe (and was even specifically recommended by Matt Cutts in this case).

Yet it is still very likely that similar content benefits more from being in themed directories. My best advice is “do not try this at home”: your run-of-the-mill site will probably be treated differently from a domain that is being closely watched by the Google Web Spam team.
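To see how clearly a site's URL structure separates its content areas, one option is to bucket its URLs by subdomain or top-level folder. The following is only a minimal Python sketch and is not taken from the Searchmetrics Suite; the function names `content_area` and `group_by_area` and the example URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def content_area(url, base_domain="hubpages.com"):
    """Label a URL by its subdomain if it has one, else by its first folder."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()

    # A host like robin.hubpages.com counts as its own content area.
    if host.endswith("." + base_domain):
        subdomain = host[: -len(base_domain) - 1]
        if subdomain and subdomain != "www":
            return "subdomain:" + subdomain

    # Otherwise fall back to the first path segment, but only if
    # something follows it (a single segment is a page at the root).
    segments = [s for s in parts.path.split("/") if s]
    if len(segments) > 1:
        return "folder:/" + segments[0] + "/"
    return "root"


def group_by_area(urls, base_domain="hubpages.com"):
    """Group URLs into a dict keyed by content area."""
    groups = defaultdict(list)
    for url in urls:
        groups[content_area(url, base_domain)].append(url)
    return dict(groups)


if __name__ == "__main__":
    # Hypothetical URLs, for illustration only.
    sample = [
        "http://robin.hubpages.com/hub/example-article",
        "http://hubpages.com/hub/another-example",
        "http://hubpages.com/hub/third-example",
    ]
    for area, members in group_by_area(sample).items():
        print(area, len(members))
```

If nearly every URL lands in a single bucket, as with one shared /hub/ folder, the URL structure alone gives no hint about which content belongs together.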

P.S.: Who’s writing this stuff? I’m Sebastian Weber and I’m an SEO Consultant with Searchmetrics. When I’m not busy writing blog posts, I’m optimizing websites throughout the internet and trying to drill down on all sorts of different things on- and offline.