With Site Structure Optimization (SSO) we provide users of the Searchmetrics Suite with an overview of the technical performance of their web projects. Whether it’s a question of titles & descriptions, crawling errors, loading times or link distributions: we analyze your websites, list all the information that is relevant for you – and give you the ability to directly implement appropriate optimization strategies. SSO is a very powerful and intelligent module to help you with on-page issues of your site. Here’s how it works: part 1, the setup.

Why Site Structure Optimization?

For professional users of the Searchmetrics Suite, who use us to track & monitor their projects, SSO offers detailed options for crawling and onpage/technical analyses of sites.

In addition to detailed evaluations of titles/descriptions, a large number of other key performance indicators can be analyzed, e.g.:

- pages by level analyses,

- the distribution of internal and external links,

- link target analyses,

- HTTP status codes,

- noindex and canonical tags and also

- loading times.

In this way, individual URLs or URL groups can be analyzed and optimized so that the site is tuned as well as possible in terms of technical, structural and search-oriented parameters.

How does the crawler work?

Our crawler generates additional traffic during a site analysis. So that your server can cope with this and still serve all users of your site with fast loading times, we use “intelligent crawling”. This has several significant advantages:

- The number of parallel requests is increased or reduced depending on the website’s response rate. If the server slows down, we reduce the number of parallel requests, so it is virtually impossible for the website to be blocked. This is safer than working with a fixed number of parallel requests. If, on the other hand, the site is fast, we crawl it at a higher rate so that the results are available sooner.

- Even after a crawl has been started, it can be interrupted manually at any time. On the one hand, the analysis can be stopped if unexpected problems with website performance occur. On the other, an “I need the data by this afternoon” analysis is possible, because all pages crawled up to that point are available in Site Structure Optimization as soon as the crawl ends.
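To make the idea of adapting the request rate more concrete, here is a minimal Python sketch. It is not the Searchmetrics crawler itself: the function name `adjust_parallel_requests`, the thresholds and the "halve when slow, add one when fast" rule are all assumptions chosen purely for illustration.

```python
def adjust_parallel_requests(current, avg_response_s, *, slow_threshold_s=2.0,
                             fast_threshold_s=0.5, min_parallel=1, max_parallel=16):
    """Return the next number of parallel requests for a given average response time.

    All thresholds and limits are illustrative assumptions, not real crawler settings.
    """
    if avg_response_s > slow_threshold_s:
        # Server is slowing down: halve the load, but keep at least one request.
        return max(min_parallel, current // 2)
    if avg_response_s < fast_threshold_s:
        # Server is responding quickly: allow one more parallel request.
        return min(max_parallel, current + 1)
    # Response time is in the acceptable band: leave the rate unchanged.
    return current


# Simulated average response times (in seconds) for successive batches of requests.
observed = [0.3, 0.3, 0.4, 2.5, 3.0, 1.0, 0.2]
parallel = 4
history = []
for avg in observed:
    parallel = adjust_parallel_requests(parallel, avg)
    history.append(parallel)

print(history)  # [5, 6, 7, 3, 1, 1, 2]
```

In this sketch the crawl speeds up while the simulated server answers quickly, backs off sharply as soon as responses become slow, and only ramps up again once the server has recovered.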

As a predefined user agent, our crawler uses the “Searchmetrics Bot” – unless otherwise specified in the setup. The URLs are processed in hierarchical order. Beginning with the start page, all links are identified and processed level-by-level.

What does page level mean?

The level of a page can be interpreted in two ways:

- Firstly, the number of slashes (“/”) in the URL that indicates the sub-directories.

- Secondly, the number of clicks required to get from the homepage to the target page.

In the context of Site Structure Optimization, level is taken to mean the number of clicks needed to get from the homepage to the target page. In our experience, this is a better representation of the URL status in terms of linkage and page structure than the number of slashes by itself. If, for example, the URL has a complex structure indicating that the page sits in a sub-sub-subdirectory, it might be a good idea to add a direct internal link from the homepage to this URL if you want to increase the page’s prominence.

URL groups can be used to evaluate URLs by slashes (“directories”). Ready-made templates are available for this purpose so that the results of a crawl are grouped by the number of directories.
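The difference between the two interpretations of “level” can be shown with a small Python sketch. The link graph, the `example.com` URLs and the helper names `click_levels` and `directory_depth` are made up for illustration; the sketch only demonstrates the two definitions, not how the Suite computes them internally.

```python
from collections import deque
from urllib.parse import urlparse


def click_levels(links, start):
    """Breadth-first search: level = minimum number of clicks from the start page."""
    levels = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in levels:  # first visit is the shortest click path
                levels[target] = levels[page] + 1
                queue.append(target)
    return levels


def directory_depth(url):
    """Number of directories in the URL path (the 'slashes' interpretation)."""
    directory_part = urlparse(url).path.rsplit("/", 1)[0]
    return len([segment for segment in directory_part.split("/") if segment])


# Hypothetical internal link graph: page -> pages it links to.
home = "https://example.com/"
shop = "https://example.com/shop/"
item = "https://example.com/shop/item.html"
deep = "https://example.com/a/b/c/deep.html"
links = {home: [shop, deep], shop: [item]}

levels = click_levels(links, home)
print(levels[deep], directory_depth(deep))  # 1 3
print(levels[item], directory_depth(item))  # 2 1
```

Here `deep.html` sits three directories down but is only one click from the homepage, because the homepage links to it directly. `item.html` is the opposite case: a shallow directory, but two clicks away.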

The SSO setup in detail

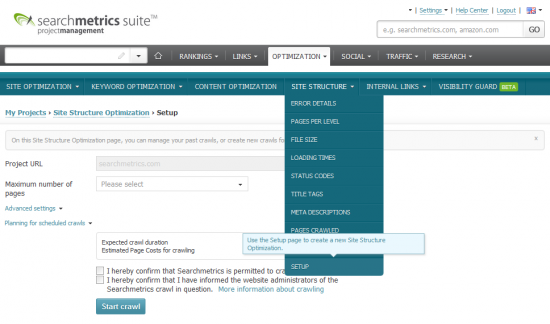

If you have created your own project in the suite, a new site structure analysis can be created with just a couple of clicks. Simply click the “Optimization” tab, select “Site Structure” and select “Setup” in the fold-out menu.

Something to remember before we go into the details: help is available for every setting as a tooltip. To access it, simply move the mouse over the input box and the corresponding context box with explanations and tips will appear.

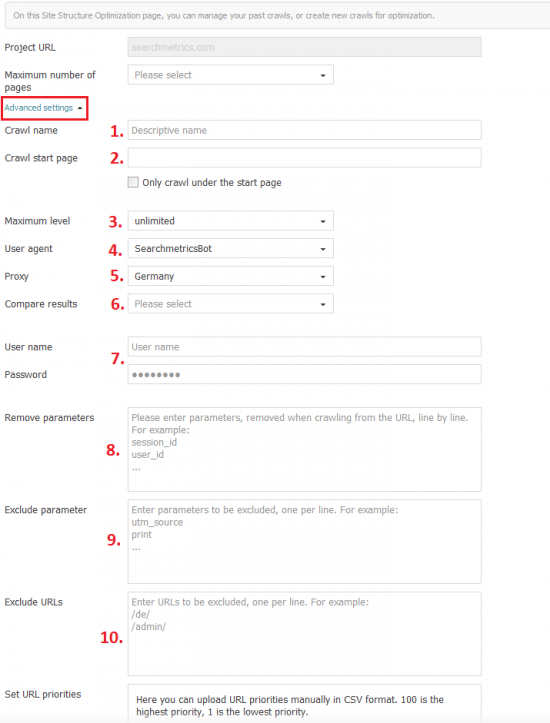

The Project URL shows which of your projects you are currently running a site structure crawl for. This can be useful if you have created several projects in the suite. Note: Alternatively, it is also possible to enter a start URL. If the project www.domain.com/de/ has been entered but cannot be accessed in this form, simply enter www.domain.com/de/start.html as the start page.

With the setting Maximum Pages, you can define a limit for the number of pages to be crawled. You can also use this setting to manage your server load and to calculate the credits for a crawl. Once the predefined number of pages has been crawled, the crawl is stopped. Exception: If a maximum level has been specified, the crawl runs until that level has been reached before it is stopped. By the way, if the actual number of pages is lower than the set number, the difference will be credited to your page credits. In other words, you only pay for what we actually crawl.
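As a quick worked example of the “you only pay for what we actually crawl” rule, here is a tiny Python sketch. The function name `credited_pages` and the numbers are hypothetical, and the sketch assumes one credit per crawled page.

```python
def credited_pages(max_pages, crawled_pages):
    """Page credits returned when fewer pages were crawled than were reserved."""
    # Assumption: nothing is credited if the crawl used up the full limit.
    return max(0, max_pages - crawled_pages)


# A crawl set up for 10,000 pages that only finds 7,350 pages.
print(credited_pages(10_000, 7_350))  # 2650
```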

Advanced Setup

- 1. Crawl Name: Especially if there are many crawls for projects, it is important to give the individual crawls names that are as distinctive as possible.

- 2. Crawl Entry Page: This box is empty by default, which means that the crawler starts on the homepage / the specified project URL. If you want to crawl individual directories, enter the respective URLs in this box and activate the checkbox “only under the start page”. How about a quick demo? Let’s take searchmetrics.com as an example. If we select www.searchmetrics.com/knowledge-base as the start page, only the pages in the subdirectory /knowledge-base of www.searchmetrics.com are crawled.

- 3. Maximum Level: The page level is defined by the number of “clicks” from the homepage (or start page). Level 1 pages are linked directly from the homepage, level 2 pages are linked directly from level 1 pages (i.e. two clicks away from the homepage). If a maximum level is defined and reached, the crawl is terminated. URLs at deeper levels will in that case not be crawled.

- 4. User Agent: The User Agent is used by the crawler to identify itself to the website and is sent along with every request. The default setting is “SearchmetricsBot”. The User Agent can be adjusted to find out how the page appears to Googlebot. The same applies to mobile bots, if you wish to know how the page appears to a mobile device.

- 5. Proxy: Normally, we crawl from Germany. However, some websites forward requests to a localized version based on the origin of the request. To handle this, we can crawl via proxy servers: if a U.S. proxy is used, the website is crawled from a U.S. IP address. The list of available proxies is being gradually extended. If you need help with this, simply contact support.

- 6. Compare Results: There is the option of comparing results from two crawls. In this case, trends in relation to the previous crawl are shown on the evaluation pages. Note that results are not compared by default, as crawl setups may differ – and a comparison only makes sense if the setup is (almost) identical. For scheduled crawls (see below), a comparison with the previous crawl is always performed. The “Compare Results” setting is therefore not required for scheduled crawls.

- 7. User Name / Password: This is a function for crawling in password-protected test environments.

- 8. Remove Parameters: There are some parameters that should be ignored during crawling (e.g. Session_id). Each parameter to be removed must be entered on a separate line.

- 9. Exclude Parameters: In contrast to removal of a parameter, exclusion makes it possible to exclude URLs from the crawl. If the bot finds a URL with a defined parameter, this page will not be crawled.

- 10. Exclude URLs: This option serves as an extension to “Exclude Parameters”. With this option, entire directories or sub-domains can be excluded from crawls.
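The difference between removing a parameter, excluding a parameter, excluding URLs and crawling “only under the start page” is easiest to see side by side. The following Python sketch uses only the standard library. The function names, the `example.com` URLs and the simple prefix matching are assumptions for illustration; the matching rules the Suite actually applies may differ.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def remove_parameters(url, remove):
    """Strip the listed query parameters; the page itself is still crawled."""
    parts = urlparse(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key not in remove]
    return urlunparse(parts._replace(query=urlencode(kept)))


def should_crawl(url, *, start_url=None, exclude_params=(), exclude_prefixes=()):
    """Decide whether a discovered URL is crawled at all."""
    query = urlparse(url).query
    param_names = {key for key, _ in parse_qsl(query, keep_blank_values=True)}
    if param_names & set(exclude_params):
        return False  # "Exclude Parameters": URL carries an excluded parameter
    if any(url.startswith(prefix) for prefix in exclude_prefixes):
        return False  # "Exclude URLs": URL lies in an excluded directory or sub-domain
    if start_url is not None and not url.startswith(start_url):
        return False  # "only under the start page": URL is outside the start directory
    return True


# Removing a parameter keeps the page but drops the session noise.
print(remove_parameters("https://www.example.com/shop?Session_id=abc&page=2",
                        {"Session_id"}))
# https://www.example.com/shop?page=2

# Excluding a parameter or a directory skips the page entirely.
print(should_crawl("https://www.example.com/shop?print=1",
                   exclude_params={"print"}))  # False
print(should_crawl("https://www.example.com/archive/2010.html",
                   exclude_prefixes=("https://www.example.com/archive/",)))  # False

# Restricting the crawl to a start directory.
start = "https://www.example.com/knowledge-base"
print(should_crawl("https://www.example.com/knowledge-base/seo.html",
                   start_url=start))  # True
print(should_crawl("https://www.example.com/blog/", start_url=start))  # False
```

In short: removed parameters change how a URL is written before it is crawled, while excluded parameters and excluded URLs decide whether it is crawled at all.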

SSO Setup: Scheduled Crawls

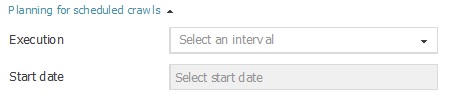

Scheduled crawls support regular crawling of a site according to custom requirements. In contrast to site optimization, the crawler can be very specifically controlled with an extensive setup without needing to forgo regular repetition.

- Execution: This option defines how frequently a crawl should be performed. The options range from daily to quarterly. If no interval is specified, the crawl is executed only once. Of course, the more pages are crawled, the longer it will take to complete the crawl.

- Interval Selection:

  - Every week / every second week: The weekday is fixed. If, for example, you only want the crawl to start on Saturdays, select weekly/every two weeks and set the start date to a Saturday.

  - Every Month / Every 2nd/3rd Month: The calendar date is fixed. If, for example, you want a crawl to always start on the first of the month, select the one month interval and define the start date as the 1st of the following month.

- Start Date: The start date indicates when the scheduled crawl will be performed for the first time.

  - For a weekly interval, the weekday of the start date determines when the crawls run. If the start date is a Saturday, the crawl will be started every Saturday.

  - For a monthly interval, the calendar date of the start date determines when the crawls run. If the 15th is entered as the start date, the crawl will always be performed on the 15th of the respective month.
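The interval rules above can be sketched in a few lines of Python. This only illustrates the date arithmetic, not the Suite’s scheduler: the function names are hypothetical, and clamping to the last day of a shorter month (for a start date such as the 31st) is an assumption, since this article does not describe that case.

```python
import calendar
from datetime import date, timedelta


def weekly_runs(start, count, every_n_weeks=1):
    """Weekly intervals: the weekday of the start date stays fixed."""
    return [start + timedelta(weeks=every_n_weeks * i) for i in range(count)]


def monthly_runs(start, count, every_n_months=1):
    """Monthly intervals: the calendar day of the start date stays fixed."""
    runs = []
    for i in range(count):
        month_index = start.month - 1 + every_n_months * i
        year = start.year + month_index // 12
        month = month_index % 12 + 1
        # Assumption: fall back to the last day of the month if the day does not exist.
        day = min(start.day, calendar.monthrange(year, month)[1])
        runs.append(date(year, month, day))
    return runs


# 1 June 2024 is a Saturday, so every weekly run falls on a Saturday.
for run in weekly_runs(date(2024, 6, 1), 3):
    print(run, run.strftime("%A"))
# 2024-06-01 Saturday
# 2024-06-08 Saturday
# 2024-06-15 Saturday

# A monthly crawl starting on the 15th always runs on the 15th.
print(monthly_runs(date(2024, 11, 15), 3))
# [datetime.date(2024, 11, 15), datetime.date(2024, 12, 15), datetime.date(2025, 1, 15)]
```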

More Info About Site Optimization

Do you have any questions, suggestions or comments on Site Structure Optimization? I look forward to hearing from you!