Every day, countless webpages are evaluated by Google quality raters, with the goal of improving the overall quality of search results. On 12 November 2015, Google updated the comprehensive guidelines these raters use to evaluate webpage and content quality (the Quality Rater Guidelines). Not long afterwards, we began to notice significant movement in our ranking and visibility data. Could we be witnessing the emergence of Phantom Update III? Search results certainly appear to have been adjusted in line with the new guidelines, so let’s take a closer look at the data.

Updated guidelines: User intent is central to quality

The Quality Rater Guidelines are important because they give us insight into how Google defines low- and high-quality pages. In early summer we saw the effects of Phantom Update II, when some sites suffered significant losses in visibility. However, some of the factors that would likely have caused losses under Phantom II now seem to have been redefined, and several sites that lost heavily back then have now seen their traffic recover. At the core of assessing a page’s quality is user intent: how well the results fulfill the user’s expectations. Let’s look at some examples affected by this update.

Assessing the quality of a webpage and its content

1. Low quality content

Content that does not give the user an adequate amount of information, or that does not correspond closely enough to the search query and user intent, could be classed as low quality. Category pages in particular seem to be affected. A category page is a hub page that gathers information from numerous subpages, typically with linked products at the top and supporting content below. The Quality Rater Guidelines clearly state that this supporting content has to correspond to the central user intent.

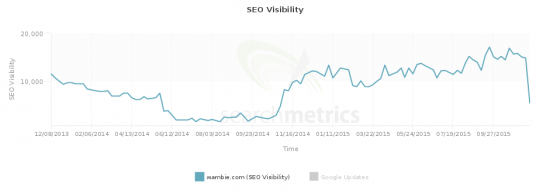

Example: wambie.com

In the example above, the games category page offers effectively no information about the games the user is expected to click on. This clearly does not match the user intent, and the significant drop in visibility seems to reflect that. Under the Quality Rater Guidelines, this category page would likely be classified as low quality.

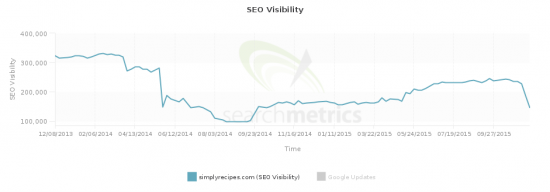

Example: simplyrecipes.com

This recipe site typically features very long introductory texts that are often unrelated to the recipe itself. The recent loss in traffic seems to reflect that this content does not match the user intent, i.e. to read or obtain a recipe.

One example is the cheesecake recipe on this domain, where the intro text is long and unrelated to the recipe. Pages whose supporting content clearly matches the user intent, on the other hand, seem to have benefited from this update. The content of the cheesecake recipe at myrecipes.com is much more relevant to the user intent, and the visibility of that page has increased correspondingly.

Example: thekitchn.com

Probably the best example of a recipe website rewarded by Google is thekitchn.com. After an already impressive rise in SEO Visibility over the last few months, thekitchn has received another ranking boost from Phantom Update III.

This success appears to be owed largely to their long-form content approach. They regularly update and expand their recipes, producing very holistic content pieces that perform well for thousands(!) of keywords. It is important to note that this content is not simply long: it is comprehensive and fulfills the user’s information needs.

2. Duplicate content

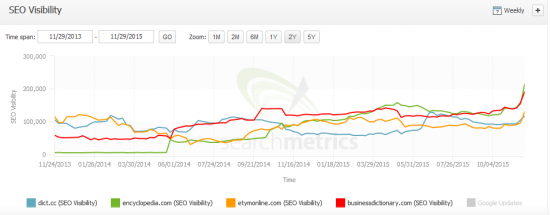

While Phantom Update II punished pages with duplicate or very similar content, the new guidelines state that for certain topics duplicate content is no longer a problem. Google gives the example of song lyrics: the text is always the same, yet pages presenting it are not treated as duplicate content. Users can still compare the accuracy and presentation quality of the results they find.

The same logic seems to apply to dictionaries. After the Phantom Update, Merriam Webster saw a 13% loss in visibility, making it one of the biggest losers. Following this update, however, Merriam Webster is the single biggest winner in Google US search results, gaining 398,016 points in SEO Visibility. This increase almost offsets the loss it suffered in the Phantom Update.

Example: Merriam Webster

This trend could be observed across other dictionaries as well.


So even if content is very similar, its quality can still differ greatly, depending on how well it matches and fulfills user intent. This refined definition of duplicate content could mean that sites in other sectors see similar gains. We will keep a close eye on this over the coming weeks.

3. Brand keywords

Another area that seems to have been negatively affected by this update is “foreign” brand keywords, i.e. brand names that do not belong to the site ranking for them. Based on the quality guidelines, user intent could be the cause: if a user searches for a particular brand, their intent is very clear. Landing on an affiliate page that merely features the brand keywords from the search query may not satisfactorily meet the user’s intent or expectation.

Example: allkeyshop.com

In the example above, users who click on a product are first directed to affiliate pages. This may not offer an optimal user experience.

Conclusion:

According to our data, Google’s updated Quality Rater Guidelines do seem to be affecting rankings, and sites that adhere to them seem to be safer from ranking losses. Perhaps the quality raters’ data has been fed into RankBrain, Google’s recently announced machine learning system, as training data. However, since this update appears to be global, an update to the algorithm itself is also a possibility.

Google has not officially confirmed that an update has been carried out, but our data suggest that the algorithm has been adjusted to bring its results in line with its new quality guidelines. Over the next few weeks we will keep a watchful eye on rankings and visibility data and see how this continues to develop.

As always, we would love to hear your comments and opinions below.