Google is loosening its chains: thanks to Penguin 4.0, numerous websites that the filter had previously targeted for web spam or for their backlink structure have seen a considerable increase in their SEO visibility. Backlink auditing, negative SEO and link reviews – these are the areas where we can expect Penguin, now integrated into Google’s core algorithm, to have a long-term impact.

Penguin updates, the first of which was introduced in 2012, have always drawn the attention of webmasters. In the past, websites penalized by Penguin for link spam could take a long time to recover. Some domains were able to change their link structure and quickly return to normal, but just as many had to wait until the next Penguin update – meaning that, in some cases, their punishment lasted more than 3 years…

What is Penguin 4.0?

The new version makes such delayed reactions a thing of the past. According to Google, Penguin 4.0 is now part of the search engine’s core algorithm. This means that the Penguin data the Google Bot gathers about links when crawling a website is now updated in real time. Google refers to “spam signals” picked up by the crawler; besides links, these can also relate to keyword stuffing or cloaking. This means that Google now brings together signals that were previously associated with other updates, such as Panda.

Increased flexibility and real-time impact

With Penguin 4.0, Google seems to be saying a final goodbye to its practice of announcing major updates. Now that all of the most important components Google uses to evaluate websites have been incorporated into its core algorithm, they are all calculated in real time. Hummingbird and RankBrain had already enabled the algorithm to reflect a real-time understanding of user intention in the search results. Panda followed suit, and now Penguin has also become part of Google’s core algorithm, which is based on data-driven machine learning. This development is in line with one of Google’s most important areas of research: in its quest to identify the most relevant search results, Google continues to make advances in the field of Artificial Intelligence (AI).

URL-based effects replace domain-wide penalties

Another significant development is that Penguin 4.0 is now URL-based, whereas previous iterations targeted domains as single entities. Websites that have been cleaned up since previous iterations have now been released from the Penguin’s grip and given a boost by this latest update. However, some websites that have not improved their backlink structure have also seen an increase in their domain’s visibility. This is because Penguin 4.0 now only penalizes the ranking of individual URLs rather than the whole domain. As these websites are no longer subject to a domain-wide filter, there are now fewer clear-cut losers.
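The difference between the two models can be illustrated with a toy calculation. This is a minimal sketch, not Google’s actual scoring: it assumes each URL contributes an independent visibility score and models a penalty as a hypothetical multiplicative demotion factor (`DEMOTION`). Under the domain-wide model, one flagged URL demotes every page; under the URL-based model, only the flagged URL is demoted.

```python
from dataclasses import dataclass

# Hypothetical demotion factor for illustration; Google publishes no such value.
DEMOTION = 0.3


@dataclass
class Page:
    url: str
    visibility: float  # illustrative per-URL visibility score
    flagged: bool      # True if this URL has spammy inbound links


def domain_visibility(pages: list[Page], url_level: bool) -> float:
    """Sum per-URL visibility under a domain-wide or a URL-level penalty model."""
    domain_flagged = any(p.flagged for p in pages)
    total = 0.0
    for p in pages:
        # URL-level: only the affected URL is demoted.
        # Domain-wide: one flagged URL demotes every page on the domain.
        penalized = p.flagged if url_level else domain_flagged
        total += p.visibility * (DEMOTION if penalized else 1.0)
    return total


pages = [
    Page("/", 50.0, False),
    Page("/blog", 30.0, False),
    Page("/cheap-widgets", 20.0, True),
]
print(domain_visibility(pages, url_level=False))
print(domain_visibility(pages, url_level=True))
```

With one flagged URL out of three, the domain-wide model cuts total visibility to about 30, while the URL-based model keeps it at about 86, which is why the shift should produce fewer clear-cut losers.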

Analysis of Penguin 4.0’s impact

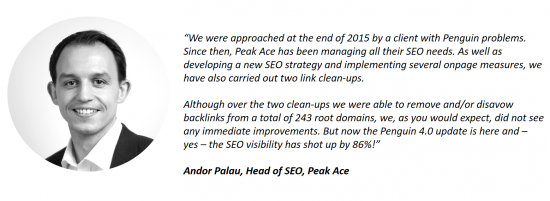

Searchmetrics’ customers have already been in touch regarding the impact of the latest update. One example is Andor Palau, Head of SEO at the Berlin-based agency Peak Ace, which uses Searchmetrics’ software. He told us about a client who approached Peak Ace with Penguin-related problems. The website’s link structure had been cleaned up months earlier without any visible improvement, but the rollout of Penguin 4.0 has now caused the site’s SEO visibility to jump significantly.

Our data from 16th October 2016 also show the impact of Penguin 4.0’s rollout. In this post, we are refraining from publishing our traditional lists of the week’s biggest winners and losers. This is because, in spite of extensive crawling on our part, it is impossible to know for certain which links a website has disavowed. This information is available only to the site’s SEOs or webmasters and to Google. We can say one thing: amongst the top winners, there are numerous domains whose visibility boost could be connected to the Penguin update, but this remains an educated guess rather than a certainty.

Past Penguin losers have recovered

Instead of a list of winners and losers, we have therefore selected some example domains and shown how they have been affected by the most recent Penguin update. A connection to Penguin can be inferred by analyzing how previous Penguin updates affected each domain’s visibility.
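This kind of check can be sketched as a before/after comparison around an update date. It is a minimal illustration under stated assumptions: `visibility_change` is a hypothetical helper, the visibility series is your own weekly data, and the four-week window is an arbitrary choice. A domain whose visibility dropped around earlier Penguin dates and rose around Penguin 4.0 would be a candidate for a Penguin connection, not proof of one.

```python
from datetime import date, timedelta
from statistics import mean


def visibility_change(series: dict[date, float], update_day: date, weeks: int = 4) -> float:
    """Relative change in mean visibility in the `weeks` after vs. before `update_day`.

    `series` maps measurement dates (e.g. weekly snapshots) to a visibility score.
    """
    window = timedelta(weeks=weeks)
    before = [v for d, v in series.items() if update_day - window <= d < update_day]
    after = [v for d, v in series.items() if update_day <= d < update_day + window]
    if not before or not after:
        raise ValueError("need data on both sides of the update date")
    return (mean(after) - mean(before)) / mean(before)


# Synthetic example: visibility steps up from 10 to 15 at week 8.
start = date(2016, 8, 1)
series = {start + timedelta(weeks=i): (10.0 if i < 8 else 15.0) for i in range(16)}
print(visibility_change(series, start + timedelta(weeks=8)))  # 0.5
```

Running the same function once per known update date gives a quick profile of how a domain has moved around each Penguin release.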

Significant drop in visibility following Penguin 3.0, increase after update 4.0

Fall in visibility after Penguin 2.0, now improved after Penguin 4.0

Various drops in visibility connected to Penguin (2.0, 2.1), large recovery following update 4.0

Google Bot’s increased crawling indicated upcoming update

Before real-time updating was activated, Google seems to have conducted a thorough analysis of existing link and page structures across the web. The Google Bot was seen to be far more active in its crawling in the time period just before the official announcement of the update.

Our assessment is that Google wanted to establish a kind of status quo for link and page structures on the internet. This would have provided a valid baseline for the forthcoming update, after which it could let its penguin loose in real time.

Google Bot crawls the penalized signals from earlier Penguin updates

What seems surprising at first is that the number of crawled pages is high, yet the download time is comparatively low; this is particularly evident in the example on the right in the image above. This could be explained by increased crawling of “old” URLs: before launching the update, the Google Bot appears to have crawled the penalized signals, that is, the specific URLs that had led to Penguin penalties in the past.

The screenshot shows a correlation between the increase in crawling activity and a rise in the number of 404 errors. Many of these old URLs presumably no longer exist, and a non-existent page can be crawled in very little time, which would account for the low download time.
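The effect is easy to reproduce on paper. The sketch below assumes you have parsed your own server access logs into simple records; `CrawlHit` and `crawl_summary` are hypothetical names, and the timing figures in the example are invented for illustration. Adding many fast 404 responses raises the number of pages crawled while pulling the average download time down.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CrawlHit:
    url: str
    status: int         # HTTP status code returned to the crawler
    download_ms: float  # time taken to download the response


def crawl_summary(hits: list[CrawlHit]) -> dict[str, float]:
    """Summarize a day of crawler hits: volume, mean download time and 404 share."""
    not_found = sum(1 for h in hits if h.status == 404)
    return {
        "pages_crawled": len(hits),
        "avg_download_ms": mean(h.download_ms for h in hits),
        "share_404": not_found / len(hits),
    }


# Invented figures: a normal day vs. a day with many requests for removed URLs.
normal_day = [CrawlHit(f"/page-{i}", 200, 400.0) for i in range(100)]
spike_day = normal_day + [CrawlHit(f"/old-{i}", 404, 20.0) for i in range(300)]
print(crawl_summary(normal_day))
print(crawl_summary(spike_day))
```

In this invented example, the spike day shows four times as many pages crawled, while the average download time falls from 400 ms to 115 ms and three quarters of the hits are 404s.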

Post-Penguin 4.0: backlink auditing will be more difficult, but no less important

Penguin 4.0 means that the Google Bot will now mark bad links to a specific URL with a negative signal as soon as they are discovered, at least in theory. However, the negative evaluation will only affect the individual URL and not the entire domain, as was the case with previous Penguin updates.

From now on, it will be more difficult to identify backlinks as the root cause of a drop in visibility. If a particular URL loses rankings, it is advisable to optimize the page first in terms of content, user intention and structure. If no improvement follows in spite of these measures, the drop could be the result of the backlink profile pulling that specific URL down, without this being apparent at first or second glance. Possible causes include a URL’s links having been built up a little too keenly, a competitor spreading bad links, or a young “SEO” happening to pick the affected URL as a target for their tests. It will therefore become harder to determine precisely how Google is evaluating a website’s links. What is clear is that “negative SEO” should become less prevalent, as whole domains can no longer be “shot down” by creating bad links to an “opponent’s” website.
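One way to look for links that were “built up a little too keenly” is to check how strongly a single anchor text dominates a URL’s backlinks. This is a common heuristic, not something Google has documented for Penguin; the helper names and the 50% threshold below are hypothetical, and the anchor texts would come from whatever backlink tool you use.

```python
from collections import Counter

# Hypothetical threshold: flag a URL when one anchor text exceeds this share.
MAX_ANCHOR_SHARE = 0.5


def anchor_concentration(anchors: list[str]) -> tuple[str, float]:
    """Return the most common (normalized) anchor text and its share of all backlinks."""
    if not anchors:
        raise ValueError("URL has no backlinks")
    counts = Counter(a.strip().lower() for a in anchors)
    top_anchor, top_count = counts.most_common(1)[0]
    return top_anchor, top_count / len(anchors)


def looks_over_optimized(anchors: list[str], max_share: float = MAX_ANCHOR_SHARE) -> bool:
    """True if a single anchor text dominates the URL's link profile."""
    return anchor_concentration(anchors)[1] > max_share


anchors = ["cheap widgets"] * 6 + ["Cheap Widgets", "Acme", "acme.com", "here"]
print(anchor_concentration(anchors))  # ('cheap widgets', 0.7)
print(looks_over_optimized(anchors))  # True
```

A flagged URL is only a starting point for a manual review: a brand page, for example, can legitimately attract links that mostly use the brand name as anchor text.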

Penguin 4.0 consigns the early SEO tactics of building up backlinks or pushing individual keywords to the scrap heap. Long-term measures continue to grow in importance. In addition, a regular audit of a page’s backlinks should now become a fixed part of a domain’s annual SEO plan, to ensure adequate protection against these negative signals.
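For links that cannot be removed at the source, an audit often ends with a disavow file uploaded via Google’s disavow links tool. The sketch below renders such a file in the documented plain-text format: one full URL or `domain:` entry per line, with `#` marking comment lines. The function name and the example domains are hypothetical, and whether a given link deserves disavowing remains a manual judgement.

```python
def build_disavow_file(domains: set[str], urls: set[str], note: str = "") -> str:
    """Render a disavow file: optional `#` comment, then `domain:` entries, then URLs."""
    lines: list[str] = []
    if note:
        lines.append(f"# {note}")
    # Sorting keeps the file stable between audits, which makes changes easy to review.
    lines.extend(f"domain:{d}" for d in sorted(domains))
    lines.extend(sorted(urls))
    return "\n".join(lines) + "\n"


content = build_disavow_file(
    domains={"spam-links.example"},
    urls={"http://blog.example/widgets-post"},
    note="Backlink audit, October 2016",
)
print(content, end="")
```

Keeping each year’s file alongside the audit results also records which links were disavowed and when.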

Similarly, the focus should now be on establishing a content strategy and on producing relevant, high-quality, unique content that perfectly serves the user’s intention. This is the only way to ensure that a website is actually consumed by users, linked to, liked and shared – all of which will convince Google’s algorithm to reward you with high rankings in the long term.

This post was updated on 27th October 2016.