Speed Matters both for Users and SEO: Nobody wants to wait several seconds for a website to load. Users would rather leave your site and go to a competitor than spend time waiting. “Time is money,” as the old proverb says. Time also matters for Google. Speed is one of Google’s ranking factors. All things being equal, the faster the website, the higher the rank. Nobody would argue that a fast website isn’t a necessity nowadays. The question is: how do you make your website faster? Our guest author Tomek Rudzki, Head of the Research and Development team at Elephate, winner of “Best Small SEO Agency” at the 2018 European Search Awards, explains that in the article below.

This is the last part of our Website Speed Guide. If you missed the first two parts, read them here: The Ultimate Guide to Website Speed – Part 1 and The Ultimate Guide to Website Speed – Part 2.

15. Let Browsers Cache your Static Resources

There is an undervalued feature of browsers – they can cache static content.

The browser saves resources such as the logo and CSS files to its cache, so if the user visits the same page again (or another page on the website), these resources load much faster.

To let the browser cache static files, set the “Cache-Control: max-age” header properly on the server side.

For how long should you cache the static resources? Let me quote Google’s Developers documentation:

“We recommend a minimum cache time of one week and preferably up to one year for static assets, or assets that change infrequently.”
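As a rough illustration of what setting this header on the server side can look like, here is a minimal sketch using Python's built-in `http.server`. The list of "static" extensions, the `no-cache` fallback for other responses, and the names `cache_control_for` and `CachingHandler` are assumptions made for this example; a production site would more likely set the same header in its web server or CDN configuration.

```python
from http.server import SimpleHTTPRequestHandler
from pathlib import PurePosixPath

ONE_YEAR = 365 * 24 * 60 * 60  # upper end of the quoted recommendation, in seconds

# Assumption for this sketch: these extensions count as static assets.
STATIC_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".svg", ".woff2"}


def cache_control_for(path: str) -> str:
    """Return a Cache-Control value for the requested URL path."""
    # Ignore any query string when looking at the file extension.
    suffix = PurePosixPath(path.split("?", 1)[0]).suffix.lower()
    if suffix in STATIC_EXTENSIONS:
        return f"public, max-age={ONE_YEAR}"
    # Assumed policy: everything else (e.g. HTML) is revalidated on each visit.
    return "no-cache"


class CachingHandler(SimpleHTTPRequestHandler):
    """Serves files from the current directory and adds a Cache-Control header."""

    def end_headers(self) -> None:
        # self.path may be missing if the request line could not be parsed.
        self.send_header("Cache-Control", cache_control_for(getattr(self, "path", "")))
        super().end_headers()
```

To try it locally, something like `ThreadingHTTPServer(("127.0.0.1", 8000), CachingHandler).serve_forever()` (with `ThreadingHTTPServer` imported from `http.server`) should serve the current directory, and the response headers can then be inspected in the browser's network panel.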

16. Can your Server Handle High Load and Multiple Requests from Users and Googlebot?

It may happen that your website/server is pretty fast. You open it and all the resources load within a second. But it may be really slow if a large number of users visit your page in parallel.

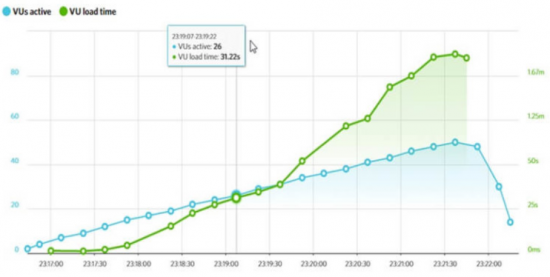

How to check? There is a helpful tool called “LoadImpact.com.” The tool emulates up to 20 users opening your website at the same time. I checked it and the Searchmetrics server works fine when many users request it.

But that’s not always the case, as you can see in the screenshot below:

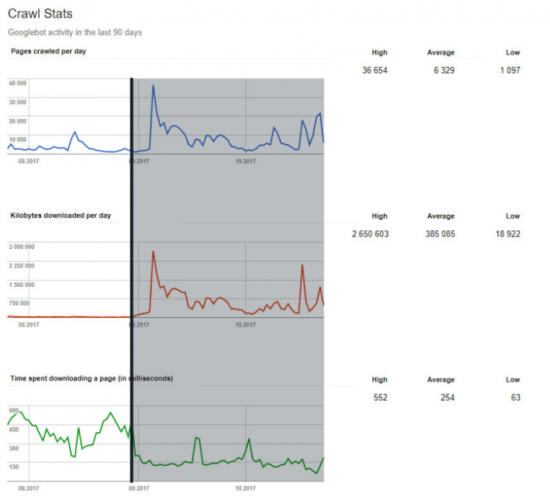
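For a quick, rough version of the same idea, the sketch below sends a number of requests in parallel and records how long each one takes. It is not a replacement for a dedicated load-testing tool, and it should only be pointed at servers you own. The function names and the default of 20 parallel users (taken from the tool's limit mentioned above) are choices made for this example.

```python
import statistics
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def timed_get(url: str, timeout: float = 10.0) -> float:
    """Fetch the URL once and return the elapsed time in seconds."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read()  # download the full body, as a browser would
    return time.perf_counter() - start


def parallel_load(url: str, users: int = 20, fetch=timed_get) -> list:
    """Issue `users` requests at roughly the same time; return each duration."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        return list(pool.map(fetch, [url] * users))


def summarize(durations: list) -> dict:
    """Reduce the raw timings to the two numbers that matter most here."""
    return {"median": statistics.median(durations), "slowest": max(durations)}
```

Calling `summarize(parallel_load("https://your-site.example/"))` (a placeholder URL) and comparing the result with a single `timed_get` call gives a first hint of whether response times degrade under parallel load.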

You should know that Googlebot detects whether your website responds quickly. If Google perceives that requests from Googlebot slow down your server, it may request your website less frequently.

There is some evidence from the Google Webmaster Blog:

At Elephate, we have observed many examples in which optimizing the page speed resulted in Google being able to crawl more pages per day.

17. Don’t Forget about Optimizing your Backend

Although this article focuses strongly on front-end performance, I really should mention the backend.

18. Slow Database Calls

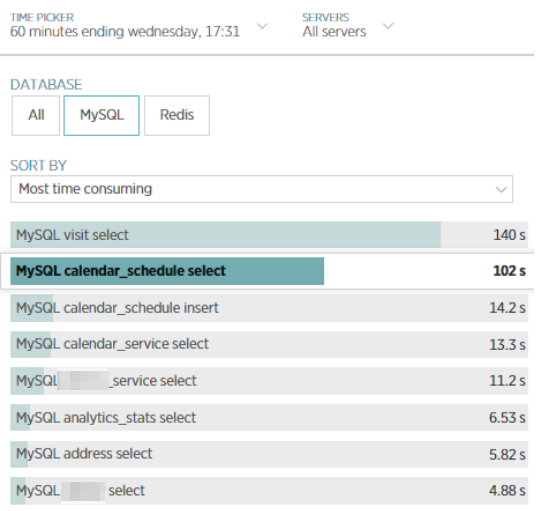

A common bottleneck in website performance is slow, unoptimized database calls. You can analyse them using tools like NewRelic (paid):

NewRelic can give your developers invaluable insights into which database queries could be optimized.

It’s also a good idea to introduce server caching, which can drastically reduce the number of expensive database calls.

19. Combining External JavaScript Files into One?

When I was auditing Giphy.com in GTMetrix, I saw a piece of advice: try to combine 20 external JavaScript files into one:

In most cases combining multiple resources into one is a bad idea. If you combine your JavaScript into one, that will be a huge file to parse, preventing your website from being interactive for seconds.

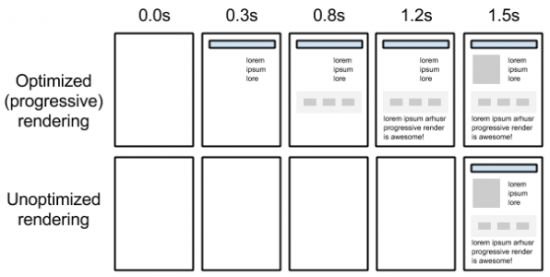

Instead, you should optimize your website for the critical rendering path. If a script is not necessary for above-the-fold content, what’s the point in loading it at the same time as the scripts that are necessary for rendering?

Courtesy: Ilya Grigorik, https://developers.google.com/web/fundamentals/performance/critical-rendering-path/

At Elephate, we noticed that Google has fairly strict timeouts. Google will probably not wait for a JavaScript file if it takes more than 5 seconds to fetch and execute.

If you want to read more about it, read JavaScript vs. Crawl Budget: Ready Player One by Bartosz Góralewicz.

20. Use HTTP/2

For years, all users were connecting to websites through the HTTP/1.1 protocol.

It’s still very popular, but… 18 years old. When HTTP/1.1 was designed, nobody could imagine that around 40% of the world’s population would use the internet.

The modern web is evolving, so we need protocols (ways of communication) that are up-to-date, created with speed in mind. HTTP/2 is an example of such a protocol.

There are many advantages of HTTP/2 over HTTP/1.1:

- support for “pushing” resources. If a browser requests an HTML file, you can “push” files necessary for rendering, like CSS files, primary images. Thanks to this, web pages can be rendered faster

- header compression

- you can send multiple requests through a single TCP connection (I know, I sound geeky, but that feature really is a game changer).

All major browsers and servers support HTTP/2. It degrades gracefully; if a browser (or Googlebot) doesn’t support HTTP/2, the old HTTP/1.1 protocol can be used.
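The choice between HTTP/2 and HTTP/1.1 is typically made during the TLS handshake through ALPN, where the client lists the protocols it can speak and the server picks one. The sketch below uses Python's standard `ssl` module to check which protocol a server selects; the function names and the 5-second timeout are choices made for this example.

```python
import socket
import ssl


def make_alpn_context() -> ssl.SSLContext:
    """TLS context that offers HTTP/2 first and HTTP/1.1 as the fallback."""
    context = ssl.create_default_context()
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0):
    """Connect to host and return the protocol the server picked via ALPN.

    Returns "h2" for HTTP/2, "http/1.1" for the fallback, or None if the
    server did not take part in ALPN negotiation.
    """
    context = make_alpn_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

Running `negotiated_protocol("your-site.example")` against your own hostname (the one shown is a placeholder) should return `"h2"` if HTTP/2 is enabled on the server.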

Side note:

In practice, using HTTP/2 means using HTTPS (encrypted connections), because browsers support HTTP/2 only over encrypted connections. Truth be told, HTTPS adds some overhead, but:

- Everyone is moving toward HTTPS. Web browsers even mark pages not using HTTPS as not secure, which scares users.

- HTTP/2 offers many other benefits; definitely the pros outweigh the cons.

We’re done? No. We’re just at the beginning of our journey!

I have done my best to provide as much valuable information as I could to get you started. The performance checklist is long and involved, and there are many more things to discover, such as the PRPL pattern and code splitting, AMP, removing unused code, etc.

You now have the foundation to start taking a critical look at the speed of your website and, more importantly, the tools to do something about it. As a next step, I strongly recommend that you read Google’s documentation on web performance. Make sure page loading speed is a part of your SEO audits.